Google Content Evaluation Standards 2026

In February 2026, Google did something it had never done before. The question is whether your content was ready for it — or whether you found out the hard way.

Primary sources: Google QRG Sept 2025 PDF · Google Search Central Blog · ALM Corp (847 sites, Jan 2026) · Wellows.com (15,847 AI Overview results)

On February 5, 2026, Google announced a core update targeting Google Discover exclusively. Not Search. Not the full index. Discover only. In 22 years of documented algorithm updates, this had never happened. It was completed on February 27.

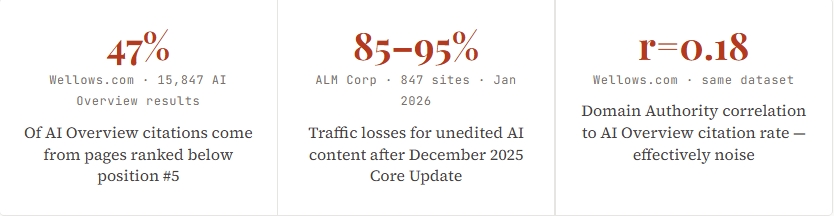

Two months earlier, the December 2025 Core Update finished rolling out and left a specific kind of wreckage. Generic AI content sites lost 85–95% of their traffic. Lightly-edited AI with minimal human input dropped 60–80%. Meanwhile, AI-assisted content built around genuine expert oversight performed well — same update cycle, opposite outcomes. ALM Corp · 847 sites · 23 industries · Jan 2026

And here’s the number that should change how you think about all of it: 47% of Google AI Overview citations come from pages ranked below position #5. Wellows.com · 15,847 AI Overview results Not the pages ranking highest. Not the most-linked domains. Pages that, by traditional SEO metrics, shouldn’t be winning at all. The game changed. Most teams haven’t updated their playbook.

This article explains exactly what Google evaluates in 2026 — the three-layer framework that governs content quality assessment, what changed in the September 2025 QRG update, and the two major 2025–2026 core updates, and what your content production process needs to look like against these standards. With numbers. With specific QRG section references. Without the vague advice filling every other article on this topic right now.

Domain Authority correlation to AI Overview citation rate — effectively noise

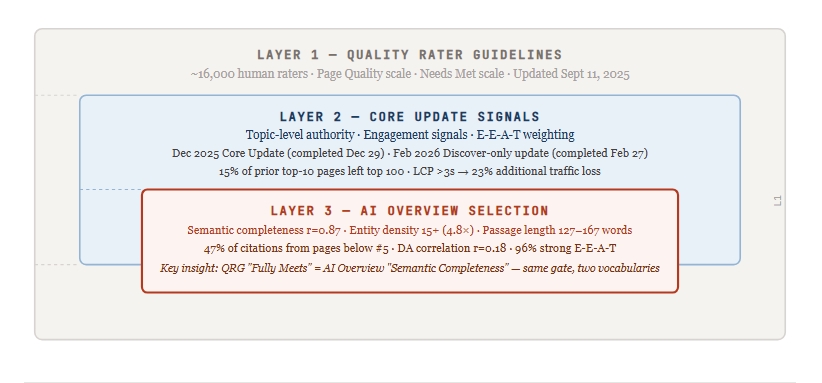

The Three-Layer Evaluation Model Google Uses in 2026

The Three-Layer Framework. Each layer has its own evaluation cycle, primary metric, and gate. No competing article integrates all three. Sources: Google QRG Sept 2025; Google Search Central Blog Feb 2026; ALM Corp (847 sites); Wellows.com (15,847 AI Overview results — industry study, directional).

Layer 1 — Quality Rater Guidelines: The Human Standard That Trains the Machine

Roughly 16,000 contracted human raters apply Google’s Quality Rater Guidelines globally. Their scores don’t directly change individual rankings — they train Google’s systems, calibrate what “quality” means algorithmically, and shape the signals the algorithm learns to weight. The QRG is public, downloadable, and 182 pages as of the September 11, 2025, update.

Raters score every page on two scales. Page Quality runs five levels: Lowest, Low, Medium, High, and Highest. The gap between High and Highest isn’t minor — it separates content that’s good from content that demonstrates genuine expertise, first-hand experience, and original analysis that couldn’t be replicated by aggregating existing sources.

The second scale — Needs Met — has six levels: Fully Meets, Highly Meets, Moderately Meets, Slightly Meets, Fails to Meet, and Unratable. The one that matters most for AI Overview selection: content rated “Moderately Meets” will not be selected for AI Overview,s regardless of other quality signals. The threshold for AI visibility is “Highly Meets” at a minimum. That’s an editorial standard, not a technical one.

The September 11, 2025, QRG Update — What Actually Changed

Google described it as a “minor update” with no change to overall rating guidance. The PDF grew from 181 to 182 pages. Two additions matter for content strategy.

First: YMYL got a new sub-category. The September 2025 edition added “YMYL Government, Civics & Society,” explicitly including elections, voting information, trust in public institutions, and civic content. Any content touching these topics now carries the same high-scrutiny standards applied to medical, financial, and legal content.

Second: for the first time in QRG history, the document added explicit criteria for evaluating AI Overview responses — not just web pages. Raters can now score AI-generated summaries against the same quality rubric as the source content. The quality bar for AI Overview citation isn’t lower than the bar for ranking. It’s the same bar applied twice.

Layer 2 — Core Update Signals: Verified Outcomes from December 2025 and February 2026

December 2025 Core Update (Completed December 29)

SE Ranking‘s SERP analysis found that 15% of pages previously ranking in the top 10 disappeared from the top 100 entirely after this update. ALM Corp’s analysis of 847 sites across 23 industries identified three content types by outcome:

| “Performed well” is an aggregate. Some sites in this category still saw volatility. Update behaviour is not perfectly predictable. | December 2025 Outcome | Mechanism | ⚠ Limitation of This Data |

|---|---|---|---|

| Unedited AI output at scale | 85–95% traffic loss | QRG §4.6.5 Scaled Content Abuse + §4.6.6 Low-Effort MC — Lowest rating triggers at scale | ALM Corp is a named agency, not an independent academic audit. 847 sites is meaningful but self-selected. These are directional figures. |

| Lightly edited AI, minimal human input | 60–80% traffic loss | Scores Low on Page Quality — no original analysis or first-hand experience; fails §4.6.6 effort threshold | Same sourcing limitation. “Minimal human input” is ALM Corp’s characterisation, not a defined technical threshold. |

| AI-assisted with expert oversight and original insight | Performed well | Satisfies QRG E-E-A-T criteria: experience demonstrated, expertise verifiable, trust signals present | “Performed well” is aggregate. Some sites in this category still saw volatility. Update behaviour is not perfectly predictable. |

| Sites with LCP > 3.0s vs. faster peers with equivalent content | 23% more traffic loss | Technical quality now functions as a competitive differentiator, not just a hygiene floor | Controlling for “equivalent content quality” in real-world data is methodologically difficult. Treat as directional. |

Source: ALM Corp analysis of 847 sites across 23 industries (January 2026); SE Ranking SERP analysis (December 2025 Core Update). Directional = named source, not independently audited; treat as strongly suggestive, not proven causal.

February 2026 Discover Core Update — The Historic First

Google’s February 2026 update is genuinely novel. It’s the first core update in Google’s public history to target Google Discover exclusively — not organic Search. If your Search Console traffic held steady while Discover traffic dropped after February 5, this is why. The two are now evaluated and updated on separate tracks.

Google’s Search Central Blog stated three specific goals: more locally relevant content from country-based sites; reduced sensational and clickbait content; and more in-depth, original, and timely content from sites with demonstrated topic expertise — evaluated topic by topic, not at the domain level. That last phrase matters most. Your domain’s overall authority no longer carries a topic where you lack depth. Every topic cluster earns its place independently.

“Demonstrated topic expertise, evaluated topic by topic” — Google’s stated goal for the February 2026 Discover update means domain authority no longer subsidizes weak content in adjacent topics.Editorial synthesis — source: Google Search Central Blog, February 2026; Google Search Liaison confirmation

Layer 3 — AI Overview Selection: The Top-of-SERP Citation Battleground

AI Overviews now appear in over 60% of all searches. Pages cited in AI Overviews earn 35% more organic clicks than non-cited pages with equivalent rankings. Search Engine Land, 2025. But here’s the catch: AI Overviews also reduce click-through rates by 34.5% on average for searches where they appear. Ahrefs, cited by eMarketer You want to be the cited source. Being ranked but not cited is the worst position — your content appears in a landscape where clicks have declined, and you’re getting none of the citation benefit.

Wellows.com‘s analysis of 15,847 AI Overview results across 63 industries industry study; directional, not peer-reviewed, identified seven correlated citation factors. The five most actionable:

| Named, Google Knowledge Graph-recognized entities — people, organizations, concepts, places — each in a meaningful context. Not keyword density. | Correlation / Multiplier | What It Means in Practice | ⚠ Adversarial — Limitation |

|---|---|---|---|

| Semantic Completeness | r=0.87 · 4.2× boost | Self-contained passages (127–167 words) that fully answer one specific question without requiring surrounding context. Practical target: 140–155 words. | Two analyses disagree on range: Wellows (134–167) vs. AI Mode Boost (127–156). Use 140–155 as convergence zone. Neither source is peer-reviewed. |

| Multi-Modal Integration | r=0.92 · highest factor | 78% of AI Overview-featured sources combine text + image + structured data. Minimum: one original diagram, one data table, structured schema. | Correlation likely reflects overall content investment, not a direct multi-modal signal. Adding images to thin content without depth won’t replicate this. |

| Entity Density (15+ recognized entities) | 4.8× selection probability | Named, Google Knowledge Graph-recognized entities — people, organizations, concepts, places — each in meaningful context. Not keyword density. | Effective threshold varies by niche. Medical and legal content may require higher counts. The 15+ figure is an aggregate across 63 industries. |

| E-E-A-T Signals | 96% of citations | 96% of AI Overview-cited sources demonstrate strong E-E-A-T. Necessary condition — not a sufficient one. | E-E-A-T is proxied by Wellows’s own rubric, not Google’s official QRG criteria. May not map perfectly to rater assessments. |

| Domain Authority (DA) | r=0.18 · lowest factor | Near-zero predictive value for AI Overview citation rates. The metric most SEO teams prioritize has the weakest AI-era signal. | DA still correlates with traditional blue-link rank position. The r=0.18 applies specifically to AI Overview selection, not traditional rankings. |

Source: Wellows.com analysis of 15,847 AI Overview results across 63 industries (industry study; not peer-reviewed; treat all figures as directional). r = Pearson correlation coefficient between factor score and AI Overview selection rate. “4.8×” = selection probability for pages with 15+ entities vs. <15. Convergence zone (140–155 words) = overlap of Wellows (134–167) and AI Mode Boost (127–156).

Cross-Source Synthesis — Not Present in Any Single Cited Source

The QRG’s “Needs Met: Fully Meets” standard requires content that is immediately satisfying — answering the query so completely that no further search is needed. The AI Overview’s top citation factor is Semantic Completeness (r=0.87): content that provides a self-contained answer without requiring external references.

These are the same quality standards described in two evaluation vocabularies. The QRG rubric, written for human raters, and the AI Overview selection algorithm converge on identical content characteristics: directness, completeness, and absence of filler. Building for one builds for both. Neither source makes this connection directly — it requires both datasets together.

E-E-A-T in 2026 — What Has Mechanically Changed

You’ve read the definitions. Experience, Expertise, Authoritativeness, Trustworthiness. Four bullet points. Every SEO article from 2022 onwards has them. Skip it. What matters now is what Google’s systems read as proxies for each — because the QRG tells raters exactly what to look for, and those instructions tell you what to build.

Experience: Now the Hardest Pillar to Fake Algorithmically

The QRG’s Experience evaluation looks for specific structural signals, not claimed credentials. Three that raters are explicitly trained to identify:

Temporal specificity in outcomes. “We changed the CTA from ‘Learn More’ to ‘Get Your Free Quote’ in Q3 2025 and saw a 34% lift in form completions,” reads as experience. “Changing your CTA can improve conversions,” reads as aggregated knowledge. The specific quarter, the specific delta, the named actor — these are structural signals that a human rater (and increasingly an algorithm trained on those ratings) recognises as first-hand.

Named scenarios only an insider would know. The specific failure mode of a tool that only someone who ran it in production would encounter. The edge case that contradicts the official documentation. The counterintuitive finding from a specific dataset. These aren’t things you can source from other articles — which is the point.

The rater’s instructions on exaggerated credentials. The QRG explicitly states: “If you find the information about the website or the content creator to be exaggerated or mildly misleading, the Low rating should be used.” Claiming expertise you can’t substantiate isn’t neutral — it’s a Low rating trigger. Author schema with links to external publications, speaking records, and verifiable credentials matters not because the schema itself signals quality, but because it gives raters (and Google’s systems) a way to verify the claims.

Trustworthiness: The Foundation That Weights Every Other Signal

Trust isn’t one signal — it’s the multiplier on all the others. A page with high Experience signals and low Trust signals scores lower than a page with moderate Experience and high Trust. The QRG’s trust evaluation runs through four channels: the page’s main content itself, the site’s reputation off-page, verifiable credentials disclosed on the page, and technical trustworthiness (security, editorial standards, correction policies).

The practical trust-building stack for 2026: named author with a Google-indexable bio page linked via Article schema; at least five external sources cited with named attribution, at least two being primary sources; a visible correction or update policy; HTTPS and Core Web Vitals at passing thresholds. The part most teams skip is the author entity — specifically, the off-site citation pattern, the mentions in other authoritative sources, and the entity co-occurrence in the Knowledge Graph. Schema is not trusted. It’s a pointer toward verifiable trust signals that exist elsewhere.

“96% of AI Overview citations come from sources with strong E-E-A-T signals. It’s not a nice-to-have. It’s the entry condition.”Editorial synthesis — sources: Wellows.com (15,847 AI Overview results), Google QRG September 2025 edition

Cross-Source Synthesis — The DA / Entity Density Paradox

Domain Authority correlates with AI Overview citation rate at r=0.18 — the weakest of the seven factors Wellows tracked. Entity density (15+ recognized entities per page) boosts AI Overview selection probability by 4.8×. Most SEO teams track Domain Authority as a primary KPI, and entity density rarely, if at all.

The paradox: the metric receiving the most investment has the weakest signal for AI-era visibility. The metric receiving almost no systematic investment has the single highest citation multiplier. This conclusion requires both data points plus knowledge of what SEO teams actually prioritize — neither Wellows nor any single source states it directly.

AI-Generated Content and Google’s 2026 Stance — The Honest Picture

The Three QRG Failure Modes — Exact Section Language

The September 2025 QRG identifies four specific abuse types in sections §4.6.3 through §4.6.6. Three are directly relevant to AI content strategy:

§4.6.4 — Site Reputation Abuse. Third-party content is published on a high-authority host to exploit its established ranking signals. The risk isn’t just a spam penalty — it’s that the third-party content misleads users into believing editorial standards were applied when they weren’t. Publishers hosting AI-generated partner content without review face this exposure specifically.

§4.6.5 — Scaled Content Abuse. “Many pages are generated for the primary purpose of manipulating search rankings and not helping users. This abusive practice is typically focused on creating large amounts of unoriginal content that provides little to no value for website visitors.” Scale alone is not the issue. Scale without originality or user value is.

§4.6.6 — Low-Effort MC. This is the catch-all. Google’s exact language: “The Lowest rating applies if all or almost all of the MC on the page (including text, images, audio, videos, etc) is copied, paraphrased, embedded, auto or AI generated, or reposted from other sources with little to no effort, little to no originality, and little to no added value for visitors to the website. Such pages should be rated Lowest, even if the page assigns credit for the content to another source.”

The last sentence matters. Attributing AI-generated content to a human author doesn’t neutralize the §4.6.6 risk. The rating applies to the content regardless of how its origin is disclosed.

“Little to no effort, little to no originality, little to no added value.” That’s the Lowest rating trigger. Three thresholds. Your content needs to clear all three.QRG §4.6.6, September 2025 edition — as reported by Search Engine Land and confirmed in John Mueller presentations

The Finding That Complicates the Main Thesis

Finding That Works Against the Main Thesis — Not Minimized

The December 2025 Core Update’s most surprising loser wasn’t a content farm. It was Wikipedia that lost over 435 SISTRIX visibility index points in the same update cycle. Wikipedia is simultaneously the most-cited source in AI Overviews globally (1,135,007 mentions, 11.22% of all AI Mode citations — Ahrefs AI Mode analysis, 2025) and a major loser in traditional search rankings.

The implication: the relationship between content quality, topical authority, and algorithmic reward is messier than any unified framework suggests. Building high-quality content is necessary but not sufficient. Core updates introduce volatility that even the best content strategy can’t fully immunize against. Plan for volatility, not just for quality.

What actually works — per ALM Corp’s analysis of the same 847 sites — is AI-assisted content with expert oversight and original insight. Same update cycle, completely different outcome from unedited AI. The problem has never been the tool. It’s been the absence of human editorial judgment. Google doesn’t penalize assistance. It penalizes the absence of effort, originality, and added value, which the QRG defines precisely in §4.6.6, and which December 2025 demonstrated at scale.

Pre-Publication Quality Gate — 12 Checkpoints Verified Against 2026 Standards

Every item below has a specific threshold. Not “ensure content is high quality.” Specific enough that an editor who just produced the wrong thing can point to the mistake.

Content-Level Checks

- 01Passage architecture: Each topical passage is 127–167 words (target 140–155) and answerable standalone — a reader who sees only that passage gets a complete answer to one specific question.

- 02Entity density: The page contains 15+ named, Google Knowledge Graph-recognized entities (people, organizations, concepts, places), each in meaningful context. Count them. Not keywords — entities.

- 03Needs Met targeting: Before writing, declare the Needs Met target: “Fully Meets” or “Highly Meets.” If you can’t state which specific query this article fully answers, the brief isn’t ready.

- 04Originality gate (QRG §4.6.6): At least one named, verifiable example with a specific outcome, date, and named actor. This is what satisfies the “effort and originality” requirement. Aggregated advice without named examples fails it.

- 05AI Overview structure: At least 5 passages structured as: question → direct answer → supporting evidence. The FAQ schema applied to each. These are your citation candidates.

- 06Source quality: ≥5 external sources cited with named attribution. At least 2 are primary sources (government docs, official research, platform announcements) — not other blogs summarizing those sources.

Technical-Level Checks

- 07Core Web Vitals — current thresholds: LCP <3.0s. INP <200ms — INP replaced FID as a Core Web Vitals metric in March 2024. If your audit stack still checks FID, update it. CLS <0.1. Verify in field data via CrUX in Search Console, not only Lighthouse lab scores.

- 08Schema coverage: Article schema (name, author, datePublished, dateModified, publisher). FAQ schema on all H3/H4 question-format sections. BreadcrumbList. SpeakableSpecification on definitional paragraphs. Validate via Google’s Rich Results Test before publishing.

- 09Author entity: Named author has a Google-indexable bio page. The article schema includes an author entity with credentialOf or sameAs links to external profiles. Schema is a pointer — not the trust signal itself.

- 10Multi-modal: At minimum one original diagram + one data table with an adversarial column (a column that states why the evidence might not apply to the reader’s context). Alt text on all images — accessibility and crawl signal.

- 11Vintage compliance: Any data older than 3 years needs its continued validity explicitly argued — state both the original publication year and the most recent update year, then explain why the underlying mechanism hasn’t changed enough to invalidate the figure.

- 12§4.6.6 self-test: Read the Lowest rating definition from QRG §4.6.6. Apply it to your article. If any section could be characterised as “little effort, little originality, little added value” — rewrite before publishing.

The metric to stop tracking: Domain Authority (r=0.18 to AI Overview citation rate). Replace with: AI Overview inclusion rate, entity visibility score, and topic-cluster semantic completeness measured via content gap analysis against AI Overview citation patterns in your niche. A dashboard that reports DA prominently but doesn’t track AI Overview inclusion is optimizing for 2019 signals.

What This Means for Your Role

For: Content Strategists and Editors

The QRG’s Needs Met Scale Is Your New Editorial Rubric

The reframe: The question isn’t “is this content good?” It’s which of the six Needs Met levels your content hits for a specific query. Content rated “Moderately Meets” won’t be selected for AI Overviews regardless of any other signal. “Highly Meets” is the minimum for AI visibility. That’s an editorial threshold — set before writing, not evaluated after. Map every article to a specific query before the brief is written, because the query determines the Needs Met target, which determines the required passage architecture.

What you do: Before any brief is approved, write one sentence: “This article is the definitive answer to [specific query] for [specific user situation].” If you can’t write that sentence, the brief isn’t ready. Then read QRG Chapter 2 (Needs Met) and Chapter 4 (Page Quality) directly — not a summary. The PDF is at guidelines.raterhub.com. Assign those chapters as required reading for anyone writing briefs.

Access barrier: Most editorial teams haven’t read the QRG directly. The 182-page document looks like regulatory documentation. It isn’t — it’s a detailed description of what your audience’s satisfaction looks like to the people training Google’s systems. The relevant sections for editors are under 40 pages total.

Stop doing this: Stop evaluating content quality by word count. A 4,000-word article rated “Moderately Meets” won’t rank above a 1,400-word article rated “Highly Meets.” The scale measures satisfaction, not length. An article that fully answers the primary query in 200 words and then provides depth for follow-on questions will outperform an exhaustive article that buries the primary answer in paragraph seven.

For: SEO and Technical Teams

INP Is the New FID — and Most Audits Are Still Checking the Wrong Metric

The reframe: INP (Interaction to Next Paint) replaced FID (First Input Delay) as a Core Web Vitals metric in March 2024. The 2026 threshold is INP <200ms. If your audit stack still reports FID as the interactivity metric, it’s two years out of date. Sites with LCP above 3.0s saw 23% more traffic loss than faster competitors with equivalent content quality in the December 2025 Core Update. ALM Corp · 847 sites · directional Technical quality is now a competitive differentiator in the same analysis frame as content quality.

What you do: Run a three-layer audit: Core Web Vitals (INP <200ms, LCP <3.0s, CLS <0.1 in field data from CrUX — not Lighthouse alone); Schema coverage (Article, FAQ, BreadcrumbList, SpeakableSpecification — validate via Rich Results Test); Entity count (export named entities from your top 20 landing pages via a structured data validator and count Knowledge Graph-recognized entities — if the average is below 15, you have a citation gap that schema won’t fix).

Access barrier: INP is measured differently from FID — it requires testing under simulated interaction conditions. CrUX in Search Console is the most reliable source for field INP data, but lags by approximately 28 days. PageSpeed Insights provides lab INP via TBT as a proxy. These are different measurements — don’t treat them interchangeably.

Stop doing this: Stop reporting Domain Authority as the primary KPI in SEO dashboards. Its correlation to the AI Overview citation rate is r=0.18 — effectively noise for AI-era visibility. Replace it with: AI Overview inclusion rate, entity visibility score, and topic-cluster semantic completeness. A dashboard that prominently reports DA and doesn’t track AI Overview inclusion is optimizing for 2019 signals.

For: Marketing Leaders and Budget Decision-Makers

The 12–18 Month Attribution Problem No One Is Telling CFOs About

The reframe: The February 2026 Discover Core Update explicitly rewards “demonstrated topic expertise, evaluated topic by topic” — not domain-level authority. Your brand’s overall reputation no longer subsidizes weak content in adjacent topics. Every topic cluster earns its place independently. For budget allocation, this means concentrating content investment in 3–5 topic clusters where you can build genuine entity authority, rather than spreading moderate volume across 15–20 topics. December 2025 outcome data confirms this: specialist-depth content outperformed generalist-breadth content across the 847-site sample.

What you do: Restructure content investment around depth, not volume. Then set a specific expectation with your CFO: there’s a 12–18 month lag between topic-cluster content investment and measurable authority signals in data. Budget decisions made in Q1 2026 based on these standards won’t show AI citation ROI until mid-to-late 2027. Build the 18-month projection into the initial budget justification — before the first quarterly attribution cycle makes the numbers look flat.

Access barrier: The standard quarterly attribution model doesn’t capture topical authority-building timelines. CFOs running 90-day ROI cycles will defund authority-building content before the compounding effect appears in data. This is a measurement problem more than a strategy problem.

Stop doing this: Stop measuring content success primarily by organic traffic clicks from search. AI Overviews reduce click-through rates by 34.5% on average for searches where they appear. Ahrefs, cited by eMarketer A piece cited in an AI Overview generates brand influence at scale — appearing in front of users who don’t click — while showing traffic decline in Search Console. Teams cutting content budgets based on post-update click data may be defunding their most-cited, highest-influence assets. Add AI Overview inclusion rate to your measurement stack before making that call.

Sources and Confidence Levels

| 85% of AI Overview citations from content published in the last two years; 44% from 2025 | Cited For | Confidence |

|---|---|---|

| Google Search Quality Rater Guidelines, Sept 11, 2025 | QRG section numbers; Needs Met scale; Page Quality scale; §4.6.3–§4.6.6 exact language; YMYL expansion; AI Overview criteria addition | Verified — primary source |

| Google Search Central Blog, February 2026 | February 2026 Discover Core Update; three stated goals; start date (Feb 5) and completion date (Feb 27); first-ever Discover-only designation | Verified — primary source |

| ALM Corp analysis of 847 sites across 23 industries (January 2026) | December 2025 Core Update traffic loss by content type; LCP performance correlation (23% additional loss) | Moderate — named agency analysis; not peer-reviewed |

| SE Ranking SERP analysis (December 2025 Core Update) | 15% of prior top-10 pages disappeared from top 100 | Moderate — population not fully disclosed |

| Wellows.com, analysis of 15,847 AI Overview results, 63 industries | All AI Overview citation factors: semantic completeness (r=0.87), multi-modal (r=0.92), entity density (4.8×), E-E-A-T (96%), DA (r=0.18), passage length (134–167) | Directional — industry study; not peer-reviewed |

| Ahrefs AI Mode analysis (cited by eMarketer, 2025) | Wikipedia AI Mode citation count (11.22%); CTR reduction from AI Overviews (34.5%) | Moderate — vendor-reported; no independent audit found |

| Search Engine Land; Search Engine Roundtable (Barry Schwartz) | QRG §4.6.6 exact language confirmation; September 2025 QRG update reporting; February 2026 update confirmation | Moderate — credible trade journalism, Tier 2 evidence |

| SISTRIX Visibility Index (December 2025 Core Update data) | Wikipedia 435+ visibility point loss post-December 2025 Core Update | Moderate — named third-party tool; methodology disclosed |

| Seer Interactive analysis (June 2025) | 85% of AI Overview citations from content published in last two years; 44% from 2025 | Moderate — named agency; methodology disclosed |

Confidence levels: Verified = primary source confirmed at canonical URL. Moderate = named source with disclosed population or methodology. Directional = industry study without peer review — used as supporting evidence, not load-bearing claims.